Authored by Levi C. Webb

Several U.S. states are continuing to expand restrictions on social media access for minors as lawmakers respond to ongoing concerns about online safety and youth mental health.

Bills introduced and advanced in multiple states would limit how minors can access social media platforms, reflecting a broader push to regulate digital environments that were previously left largely ungoverned.

These efforts are driven by growing concern among policymakers and parents about the effects of prolonged social media use on younger users. Debate over screen time, content exposure, and platform design has increased scrutiny of how social media companies engage with minors. As a result, lawmakers are focusing on measures that require parental consent, restrict certain features, or impose time-based access limits for younger users.

Several states have already moved forward with specific legislation. Utah enacted laws requiring parental consent and limiting overnight access for minors (https://le.utah.gov/~2023/bills/static/SB0152.html), while Arkansas passed legislation aimed at age verification and parental control requirements (https://www.arkleg.state.ar.us/Bills/Detail?id=SB396&ddBienniumSession=2023%2F2023R). California has taken a broader approach through its Age-Appropriate Design Code Act, which focuses on how platforms design and present content to younger users (https://leginfo.legislature.ca.gov/faces/billTextClient.xhtml?bill_id=202120220AB2273). These laws show how states are approaching the issue from different regulatory angles.

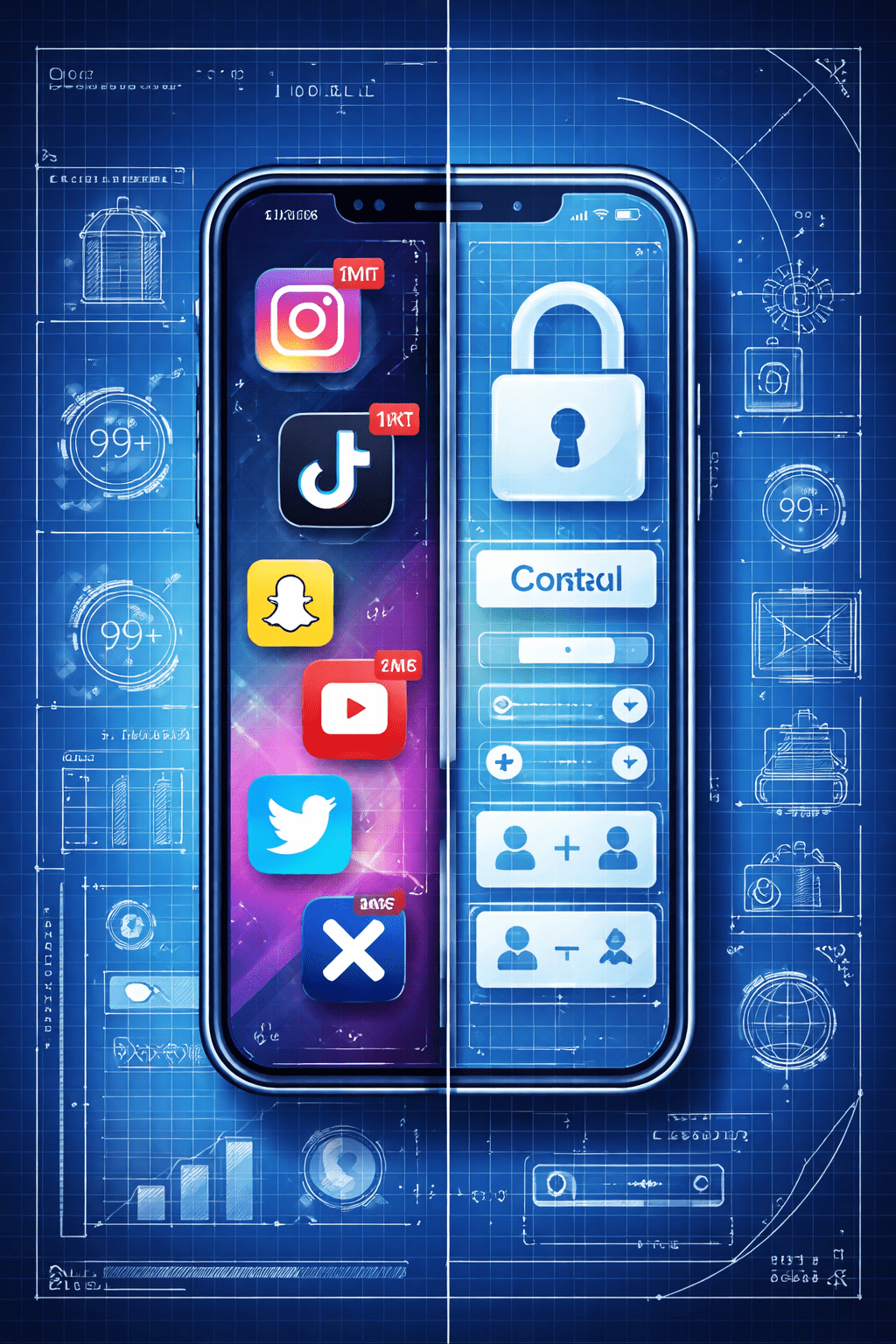

The legislative approach varies by state, but common elements include age verification requirements and restrictions on data collection practices for minors. Some proposals also seek to limit algorithm-driven content exposure, particularly content that may contribute to harmful behavioral patterns. These efforts reflect a broader attempt to shift responsibility onto platforms while also giving parents more control over how their children interact with digital spaces.

Technology companies have pushed back against some of these proposals, raising concerns about implementation challenges and potential privacy implications. Critics argue that enforcing age verification at scale introduces technical and legal complications, particularly when it comes to protecting user data. At the same time, companies are beginning to introduce their own safety features, suggesting that regulatory pressure is influencing product design.

The impact of these policies is already beginning to shape how platforms operate, especially in regions where new rules are being tested or implemented. Companies are adjusting account settings, introducing new parental controls, and modifying user experiences to align with evolving regulatory expectations. These changes indicate that even partial adoption of these laws can have broader industry effects.

Public response remains mixed, with some viewing the restrictions as necessary safeguards and others expressing concern about overreach and enforcement feasibility. The debate highlights the difficulty of balancing user safety with digital access, particularly as technology continues to evolve faster than regulatory frameworks.

The continued expansion of youth-focused social media restrictions reflects a broader shift in how governments are approaching digital regulation, as policymakers move toward more active oversight of online platforms and their societal impact.

• • • • •

Reporting and writing by Levi C. Webb. AI tools were used selectively to assist with research and editorial support.

© 2026 Fat Wagner LLC. All rights reserved.
